The Data4Mobility Hackathon, organized under the SEDIMARK project and held from 23 to 25 September, brought together participants to explore innovative solutions built on the SEDIMARK Marketplace and data platform.

A total of 34 participants registered for the event, forming teams of up to two people. The hackathon kicked off with an opening ceremony attended by the Mayoress of Santander and the Vice-Chancellor for Research and Innovation from the University of Cantabria. Participants then joined a three-hour introductory session, where they learned how to use the SEDIMARK tools available for their projects.

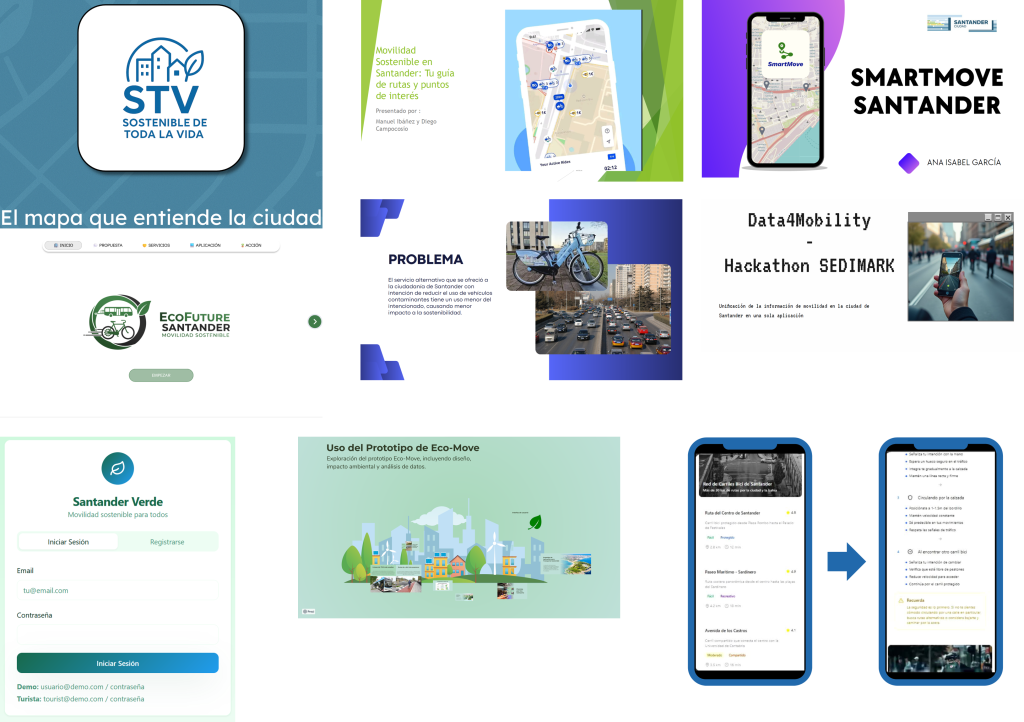

Over the two-day competition, participants worked intensively on their ideas with support from members of the SEDIMARK project team. Of the registered participants, eight teams (13 people) reached the final stage and formally presented their projects.

A feedback survey conducted after the event returned very positive results.

From a technical perspective, some performance and scalability issues appeared early on, when multiple users accessed demanding features at the same time. These were quickly resolved by increasing the platform's computational resources.

Overall, the hackathon proved to be an excellent testing ground for the SEDIMARK Platform, offering valuable insights from real users and demonstrating the potential of integrating diverse datasets within a single marketplace.